Unix Epoch — timestamp

For me, the most common situation is converting a Unix Epoch timestamp to a “datetime” object. Such a conversion can be done using the to_datetime function.

Table of Contents

pd.to_datetime(1539527952)

1.5 second past the beginning of the Unix Epoch? It was supposed to be 14 October 2018 14:39:12 UTC. What went wrong? I must specify the unit because by default the to_datetime function assumes that the given values are in nanoseconds.

pd.to_datetime(1539527952, unit = 's')

Parse a string as a datetime

That was easy, what if we have time in some weird format and still want to parse it? Let’s parse: 05/07/10. What is that? I have no idea. It can be anything. May 7, 2010? July 10, 2005? 5th of July, 2010? 7th of October 1905? People are incredibly creative and do everything they can to avoid formatting time in a way that is compliant with ISO8601 ;)

pd.to_datetime('05/07/10')

The default output is quite obvious if we get used to the fact that everything by default uses the US time format. Let’s assume that the String “05/07/10” means 2010–07–05. How do we get that value?

pd.to_datetime('05/07/10', format = '%d/%m/%y')

Error handling

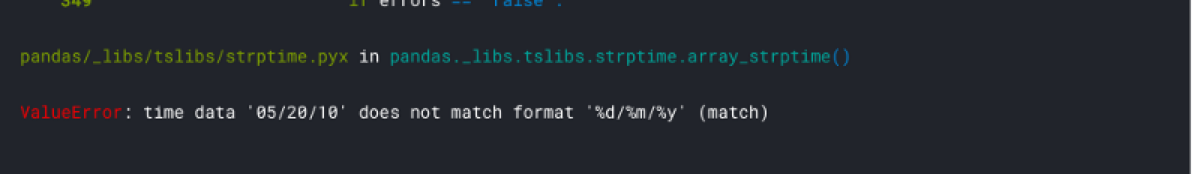

What if I have a column of such texts and I want to parse them all? I can pass the column as the argument, but what happens if there is an invalid value?

dates = pd.DataFrame(['05/07/10', '10/07/10', '21/09/11', '05/20/10', '01/01/10'])

pd.to_datetime(dates[0], format = '%d/%m/%y')

We can decide to ignore errors or return an empty value in case if an error. In my opinion, the second option is better:

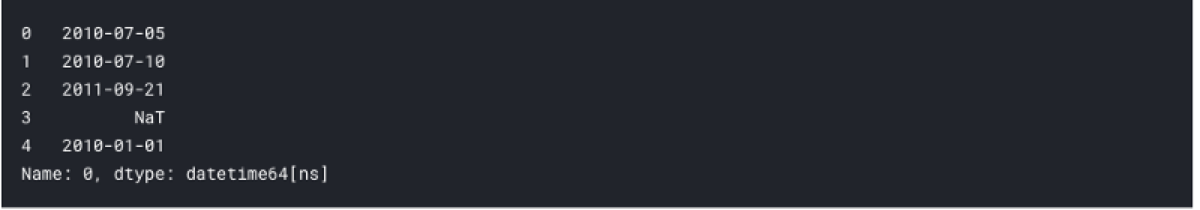

dates = pd.DataFrame(['05/07/10', '10/07/10', '21/09/11', '05/20/10', '01/01/10'])

pd.to_datetime(dates[0], format = '%d/%m/%y', errors = 'coerce')

Days of week

I have parsed text as a date. Great. What if I am building an ML model and I think that the day of week influences the value of the dependent variable? I must somehow extract the day of the week from the datetime object! Fortunately, that is the easiest part of this tutorial ;)

pd.to_datetime(1539527952, unit = 's').dayofweek

pd.to_datetime(1539527952, unit = 's').day_name()

Time zones

Nobody likes that, but sometimes we must deal with time zones.

The Timestamp object returned by the to_datetime() function has no time zone. Because of the way the Unix Epoch is defined the output can be interpreted as a time in the UTC time zone, but we can change that.

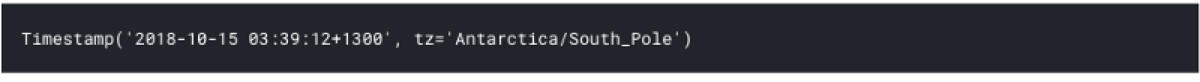

pd.to_datetime(1539527952, unit = 's').tz_localize('Antarctica/South_Pole')

Note that the code above did not recalculate the time to match the specified time zone. It replaced the time zone parameter with the given one and left everything else unchanged. Now, the value points to a different time, and we can easily prove that:

pd.to_datetime(1539527952, unit = 's').tz_localize('Antarctica/South_Pole').timestamp()

Do you see the difference between the input timestamp and the output? How can we do the proper time zone recalculation? First, we must set the origin time zone of the parsed time. We already know how to do that. As the second step, we must convert the time to the time zone we want.

pd.to_datetime(1539527952, unit = 's').tz_localize('UTC').tz_convert('Antarctica/South_Pole')